1.2.1 — Training a Denoiser (σ = 0.5)

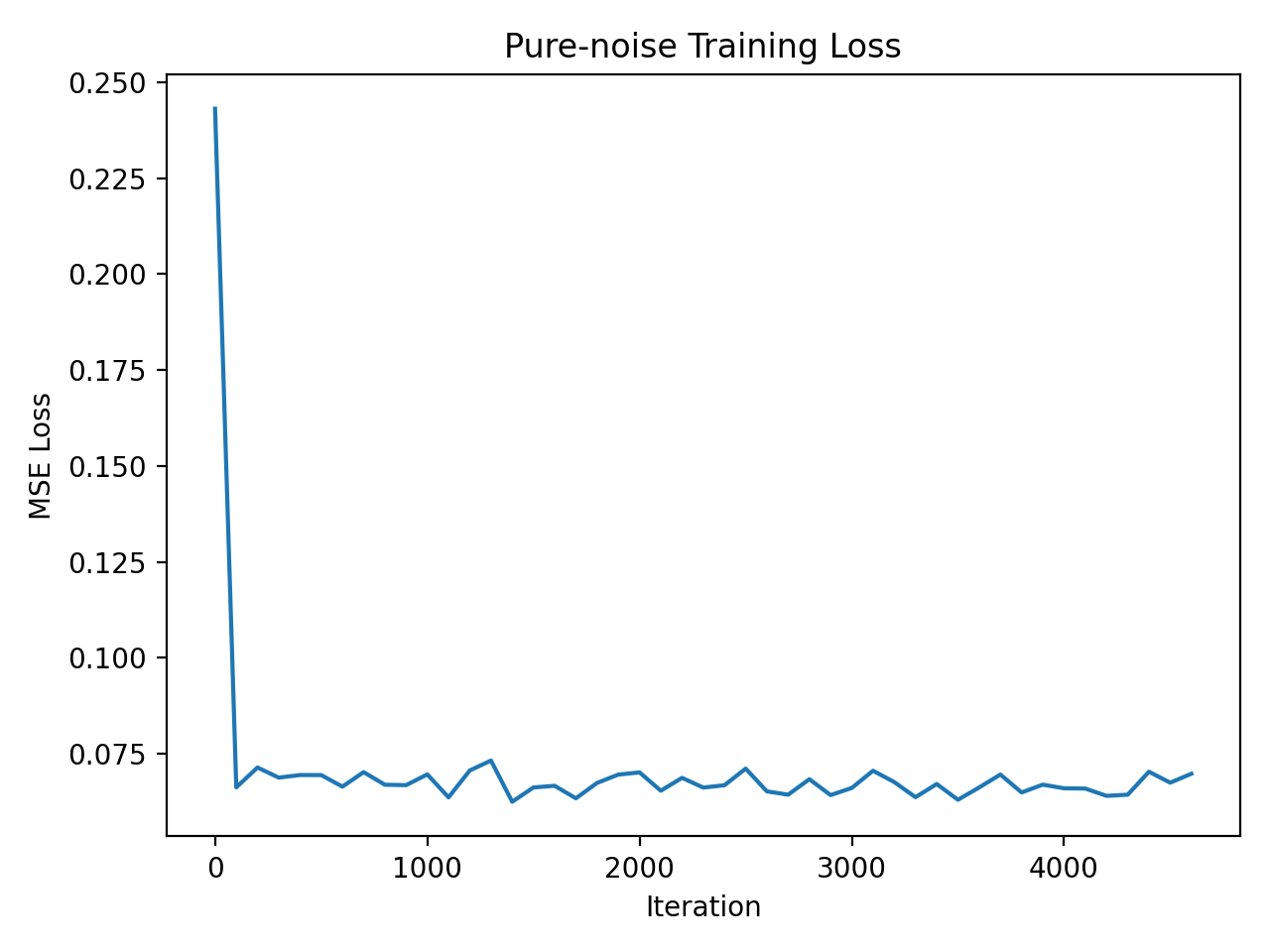

Train a UNet denoiser on MNIST at noise level σ = 0.5, i.e., on pairs of a clean digit x and its noisy version z = x + σε with ε ~ N(0, I). Below are the training loss curve and sample denoising results.
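One way to form the training pairs and objective is sketched below in NumPy. It assumes the additive model z = x + σε with ε ~ N(0, I) and an L2 (mean-squared-error) loss; the loss is not spelled out in this writeup. `add_noise`, `make_training_batch`, and `l2_loss` are hypothetical helpers, and the UNet itself is left out.

```python
import numpy as np

def add_noise(x: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Return z = x + sigma * eps with eps ~ N(0, I), same shape as x."""
    eps = rng.standard_normal(x.shape)
    return x + sigma * eps

def make_training_batch(x_clean: np.ndarray, sigma: float, rng: np.random.Generator):
    """Noise a batch of clean images; the denoiser is trained to map z -> x."""
    z = add_noise(x_clean, sigma, rng)
    return z, x_clean

def l2_loss(pred: np.ndarray, target: np.ndarray) -> float:
    """Mean squared error between the denoiser output and the clean image."""
    return float(np.mean((pred - target) ** 2))

# Usage with a stand-in batch of 28x28 "images" in [0, 1] (assumed layout N, C, H, W)
rng = np.random.default_rng(0)
x = rng.random((8, 1, 28, 28))
z, target = make_training_batch(x, sigma=0.5, rng=rng)
# An identity "denoiser" (returning z unchanged) pays roughly sigma^2 = 0.25 in MSE
baseline = l2_loss(z, target)
```

Fresh ε is typically drawn for every batch, so the network rarely sees the same noisy copy twice. The identity map gives a useful reference point of about σ² = 0.25, which a trained denoiser would be expected to beat.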

Training Loss Curve

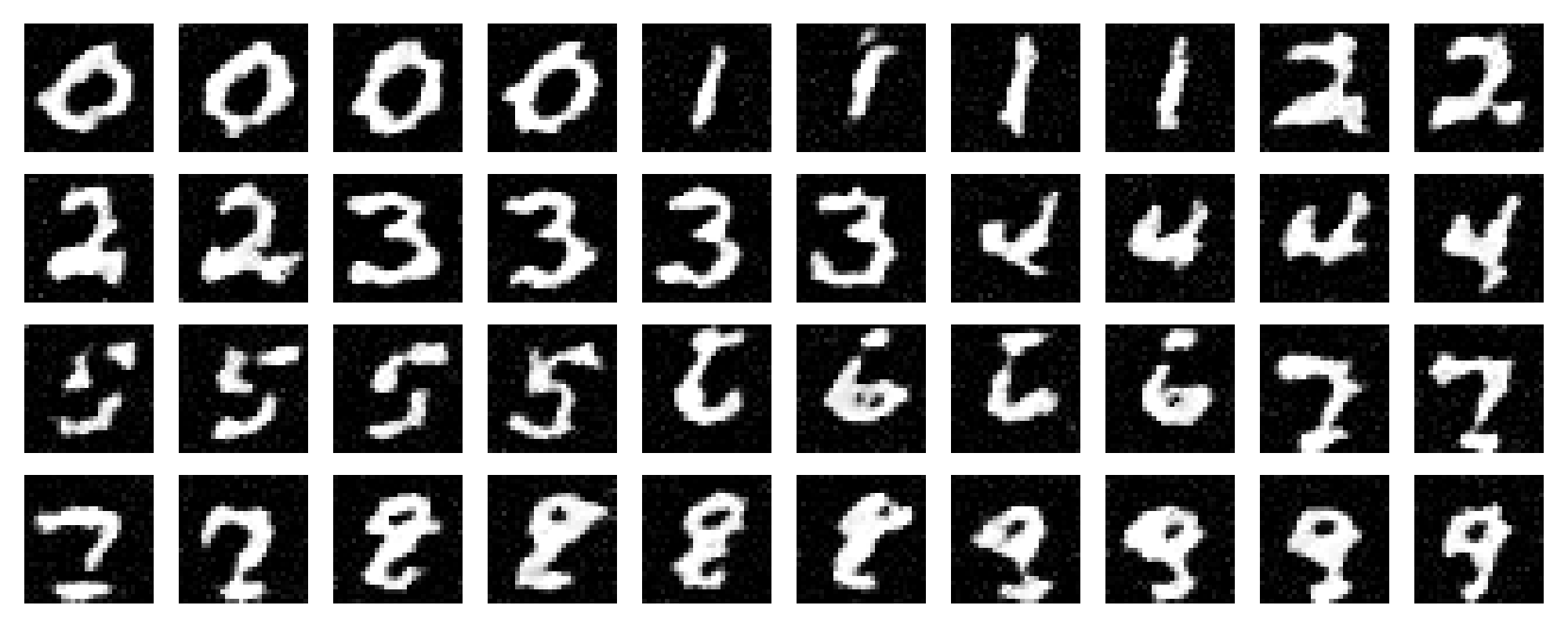

Sample Results (Epoch 1)

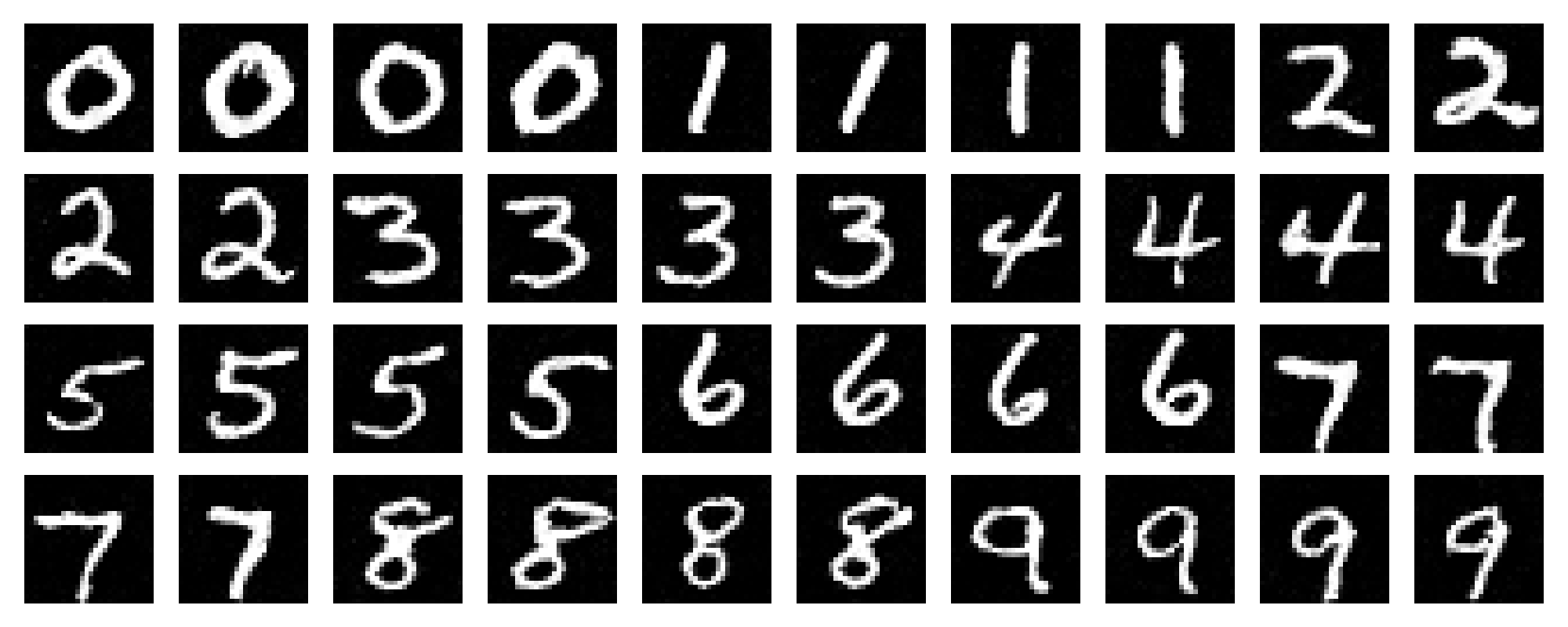

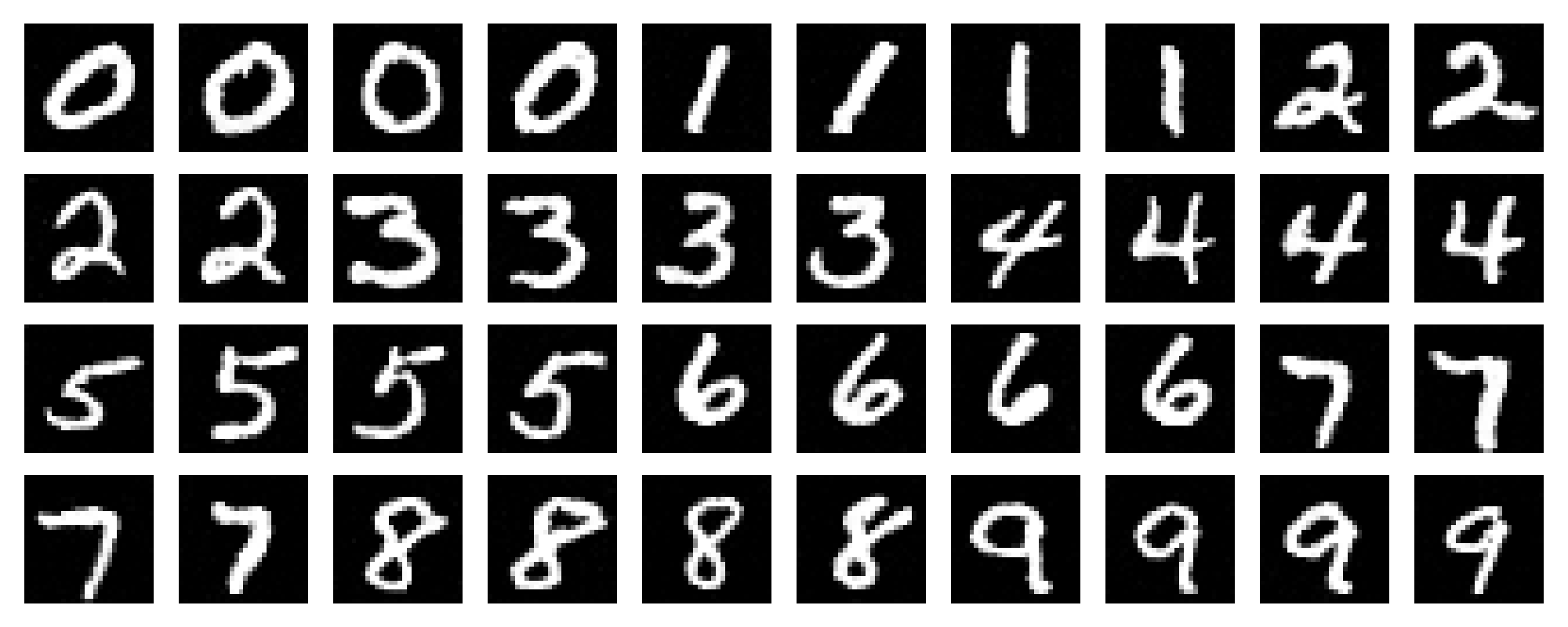

Sample Results (Epoch 5)

In Part 1, we train a UNet denoiser on MNIST with Gaussian noise and evaluate it under different noise levels.


We test the denoiser (trained only at σ = 0.5) on a fixed test image while varying the noise level over σ ∈ {0.0, 0.2, 0.4, 0.5, 0.6, 0.8, 1.0}, which includes levels it never saw during training.

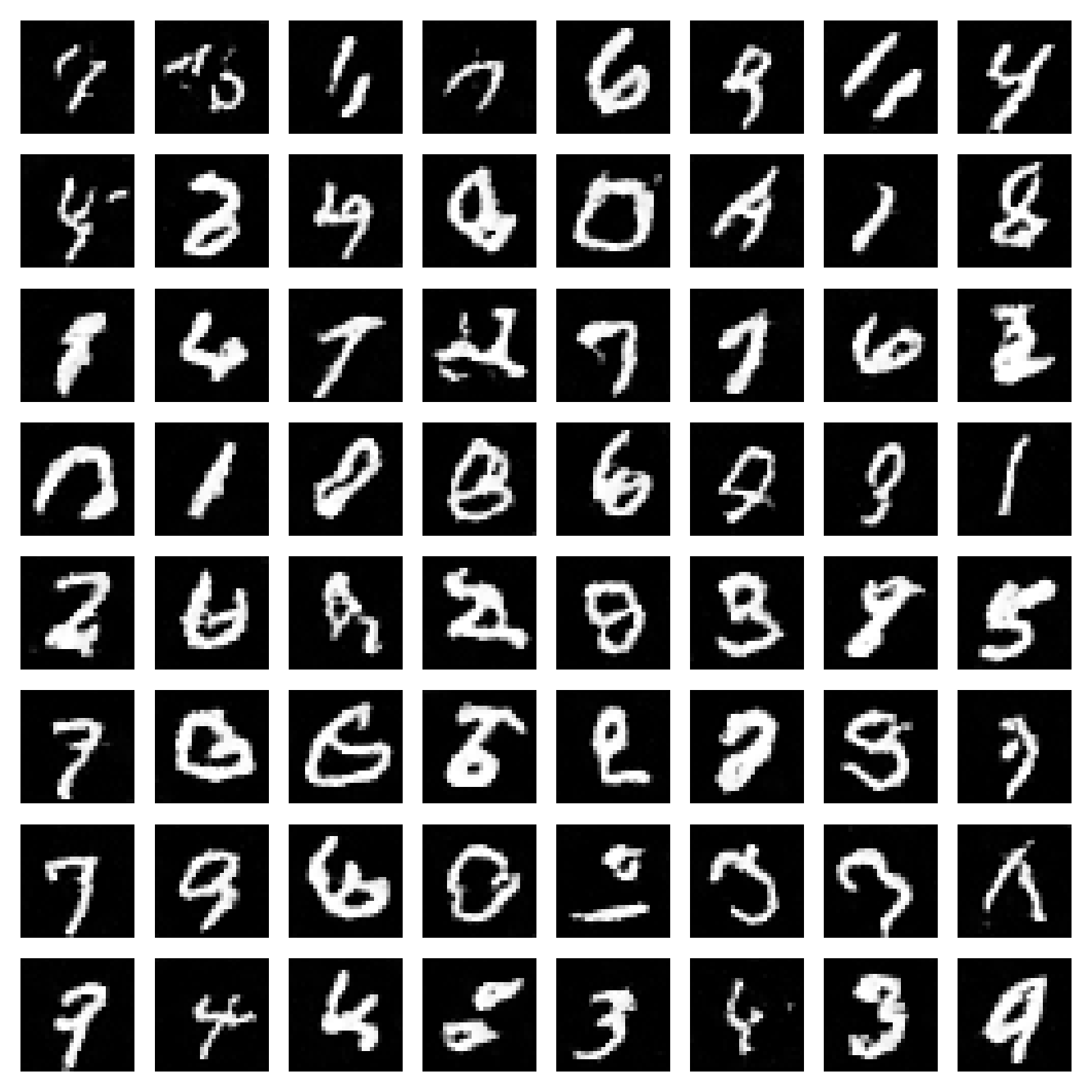

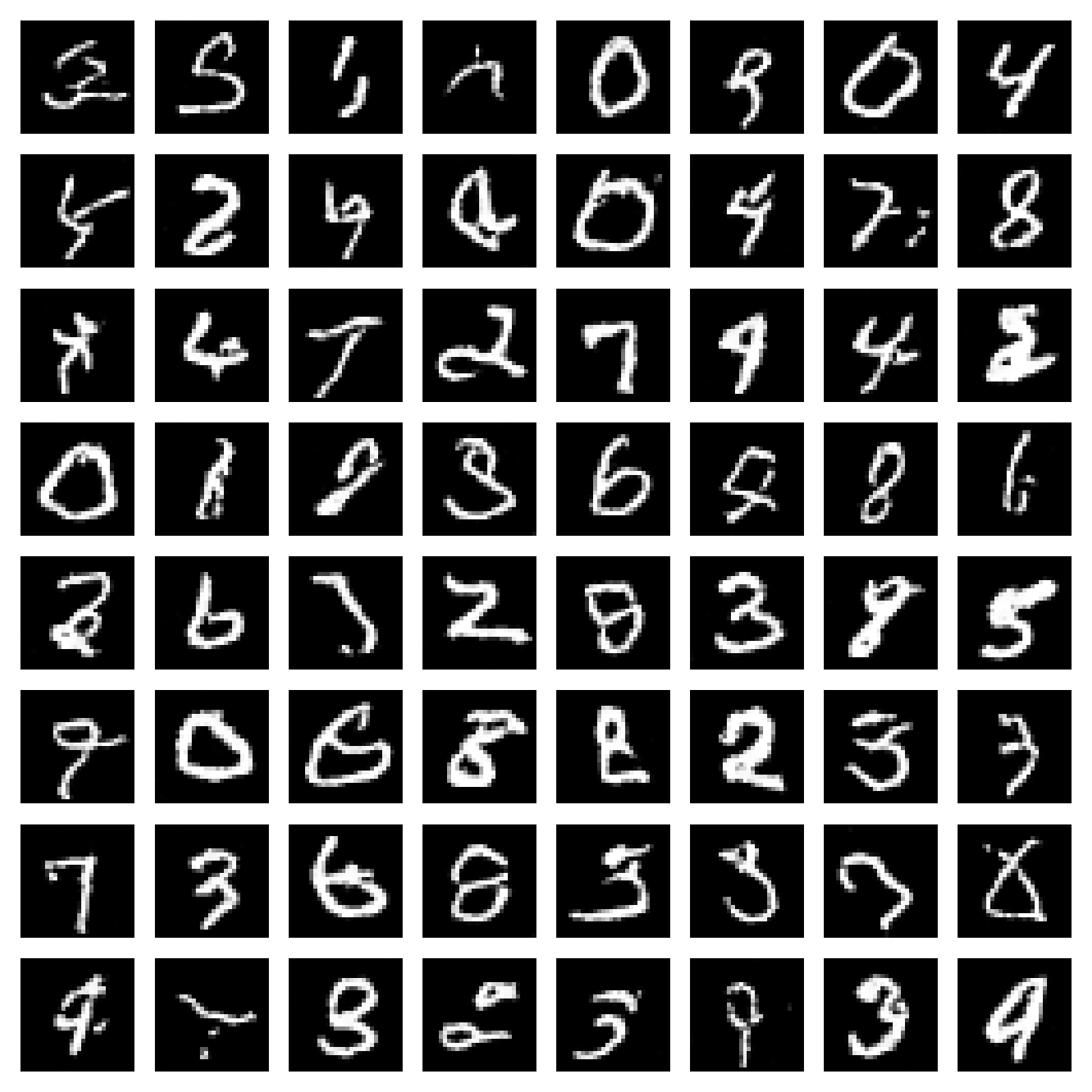
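The noise-level sweep can be sketched in NumPy as follows, assuming additive Gaussian noise z = x + σε. Drawing a single ε and reusing it for every σ is a choice made here (not stated in the writeup) so that the panels differ only in noise level. `noise_sweep`, `evaluate_sweep`, and the `denoise` callable (a stand-in for the trained UNet) are hypothetical names.

```python
import numpy as np

SIGMAS = [0.0, 0.2, 0.4, 0.5, 0.6, 0.8, 1.0]

def noise_sweep(x: np.ndarray, sigmas, seed: int = 0) -> dict:
    """Noise one fixed image at several levels, reusing the same eps draw.

    Reusing eps means the outputs differ only in sigma, which makes the
    comparison across noise levels easier to read.
    """
    rng = np.random.default_rng(seed)
    eps = rng.standard_normal(x.shape)
    return {s: x + s * eps for s in sigmas}

def evaluate_sweep(denoise, x: np.ndarray, sigmas, seed: int = 0) -> dict:
    """Return the MSE of denoise(z) against the clean x for each sigma."""
    noisy = noise_sweep(x, sigmas, seed)
    return {s: float(np.mean((denoise(z) - x) ** 2)) for s, z in noisy.items()}

# Usage: an identity "denoiser" gives the no-op baseline at each noise level
x = np.random.default_rng(3).random((28, 28))
baseline_errs = evaluate_sweep(lambda z: z, x, SIGMAS)
```

With the identity map, the error grows as σ² times the mean of ε², which gives a baseline for judging how much the trained denoiser helps at each level, including the out-of-distribution ones.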

We train a denoiser to map pure Gaussian noise z ~ N(0, I) into clean MNIST digits. Below are the training curve and samples after epochs 1 and 5.

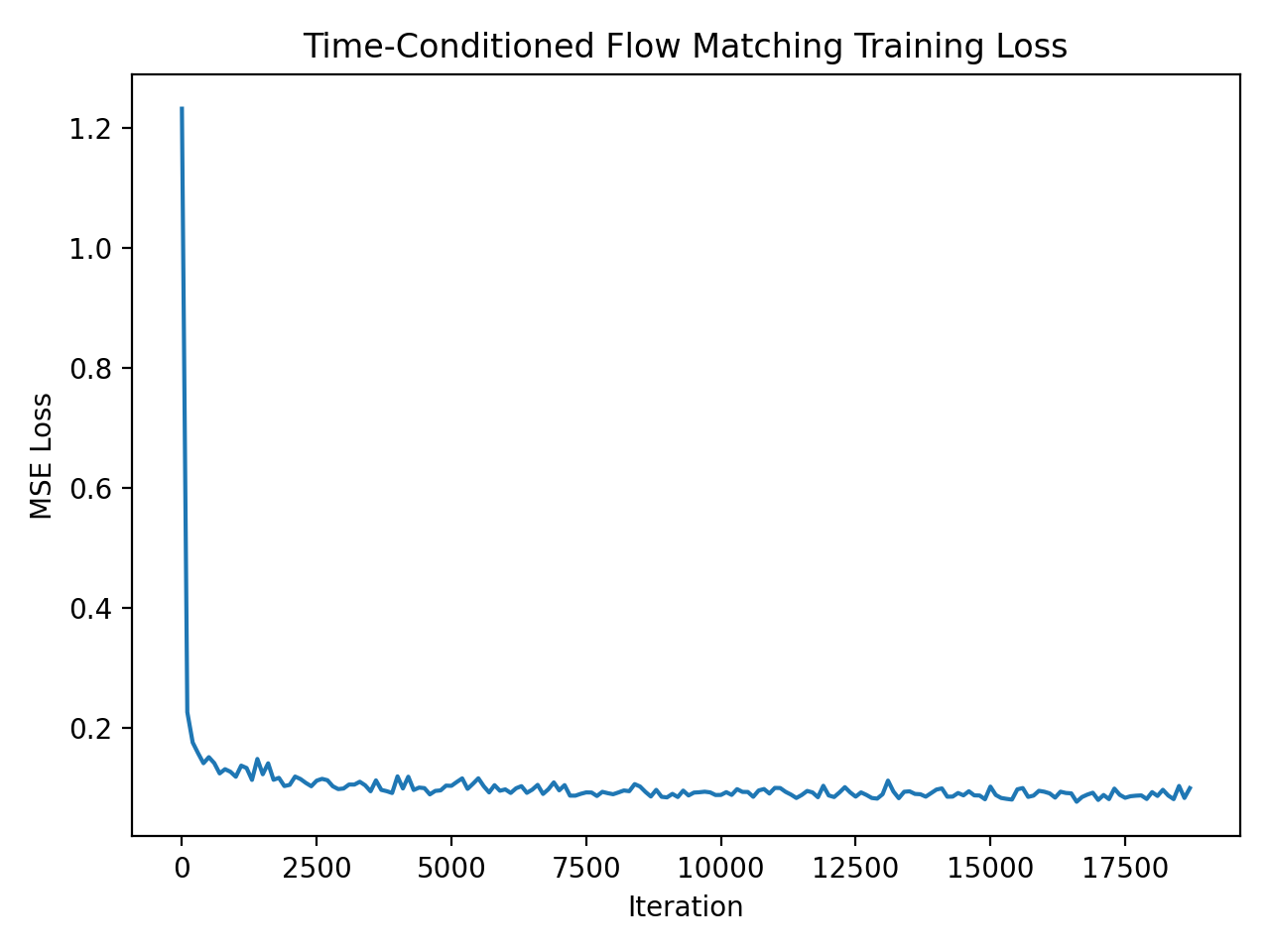
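Training pairs for this setup differ from the σ = 0.5 case only in that the input is drawn independently of the target. Below is a minimal NumPy sketch, assuming an L2 training loss (not stated in the writeup); the function names are hypothetical. The second helper records a standard property of that loss: when the input carries no information about the target, the MSE-optimal output, whatever the input, is the per-pixel mean of the training digits.

```python
import numpy as np

def make_pure_noise_batch(x_clean: np.ndarray, rng: np.random.Generator):
    """Pair each clean digit with an independent draw z ~ N(0, I).

    Unlike z = x + sigma * eps, this z carries no information about x.
    """
    z = rng.standard_normal(x_clean.shape)
    return z, x_clean

def best_constant_prediction(x_clean: np.ndarray) -> np.ndarray:
    """Per-pixel mean of the targets: the minimizer of the L2 loss among
    predictions that ignore their input."""
    return x_clean.mean(axis=0)

# Usage with stand-in data of shape (N, 1, 28, 28)
rng = np.random.default_rng(0)
x = rng.random((64, 1, 28, 28))
z, target = make_pure_noise_batch(x, rng)
mean_digit = best_constant_prediction(target)
```

Under this assumption, blurry, averaged-looking outputs would be the expected optimum of the objective rather than a sign of a training failure.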

In Part 2, we train a time-conditioned UNet to predict the flow field for iterative denoising, then extend it to class conditioning with classifier-free guidance (CFG).
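The flow-matching objective can be sketched in NumPy as follows. This minimal version assumes the straight-line interpolation x_t = (1 - t)·x_0 + t·x_1 between noise x_0 ~ N(0, I) at t = 0 and a clean digit x_1 at t = 1, with the UNet regressing the velocity x_1 - x_0 under an L2 loss. This is one common convention and may differ in direction or parameterization from the one used here; the function names are hypothetical.

```python
import numpy as np

def flow_matching_batch(x1: np.ndarray, rng: np.random.Generator):
    """Build one flow-matching training batch.

    Assumed convention: t = 0 is pure noise and t = 1 is clean data, with
    the straight-line path x_t = (1 - t) * x0 + t * x1.
    """
    n = x1.shape[0]
    x0 = rng.standard_normal(x1.shape)             # noise endpoint
    t = rng.random(n)                              # t ~ U[0, 1), one per image
    t_b = t.reshape((n,) + (1,) * (x1.ndim - 1))   # broadcast t over pixels
    xt = (1.0 - t_b) * x0 + t_b * x1               # point on the path at time t
    u_target = x1 - x0                             # velocity along the straight path
    return xt, t, u_target

def flow_matching_loss(u_pred: np.ndarray, u_target: np.ndarray) -> float:
    """L2 regression of the predicted flow onto the target flow."""
    return float(np.mean((u_pred - u_target) ** 2))

# Usage: xt and t would be fed to the time-conditioned UNet, whose output
# is compared against u_target with flow_matching_loss.
rng = np.random.default_rng(0)
xt, t, u_target = flow_matching_batch(rng.random((4, 1, 28, 28)), rng)
```

For a given noise/data pair the target does not depend on t, but the network only sees x_t, so it still needs t to tell where along the path x_t sits.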

Train a time-conditioned UNet using flow matching. Below is the training loss curve over the full training process.

Samples produced via iterative denoising after 1, 5, and 10 epochs.

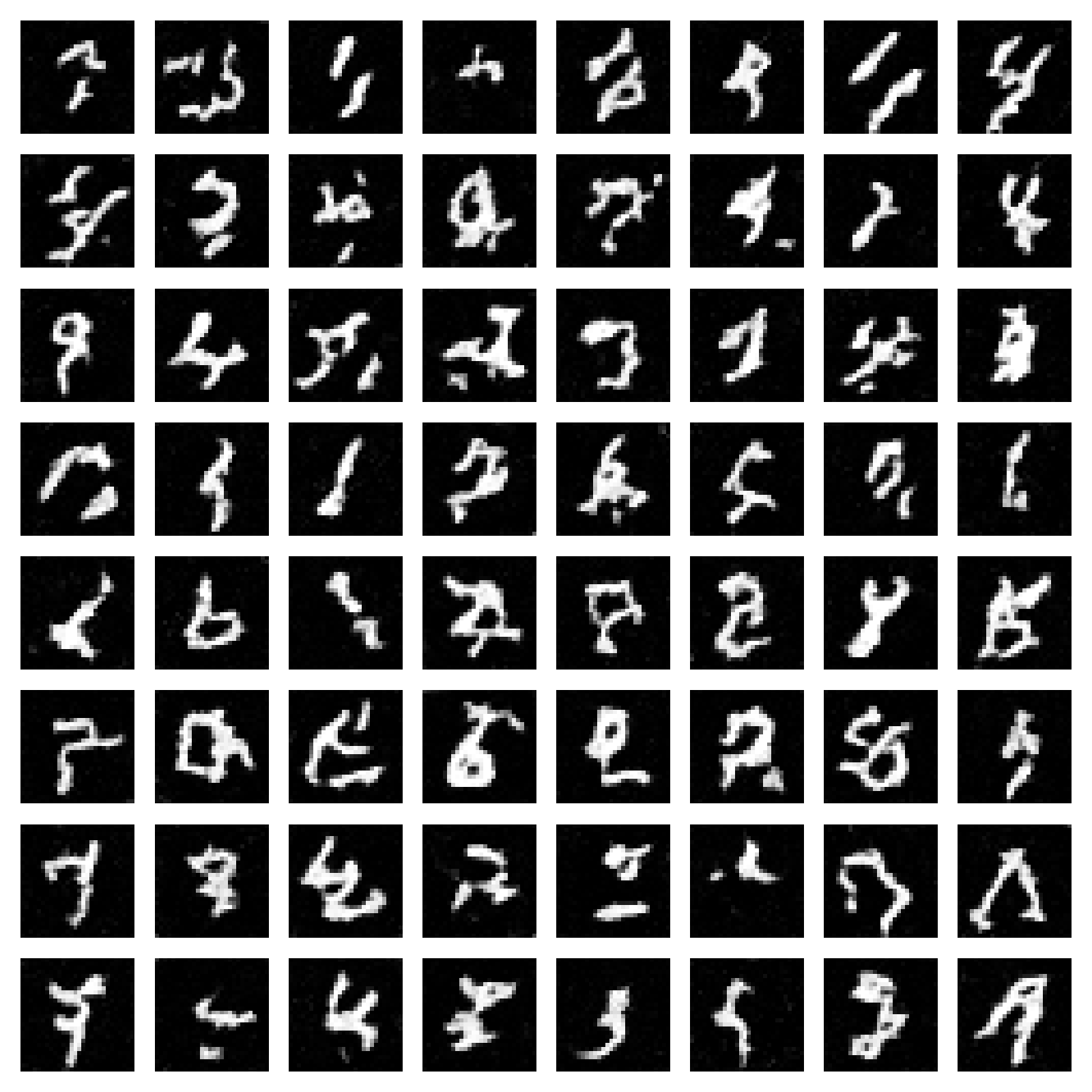

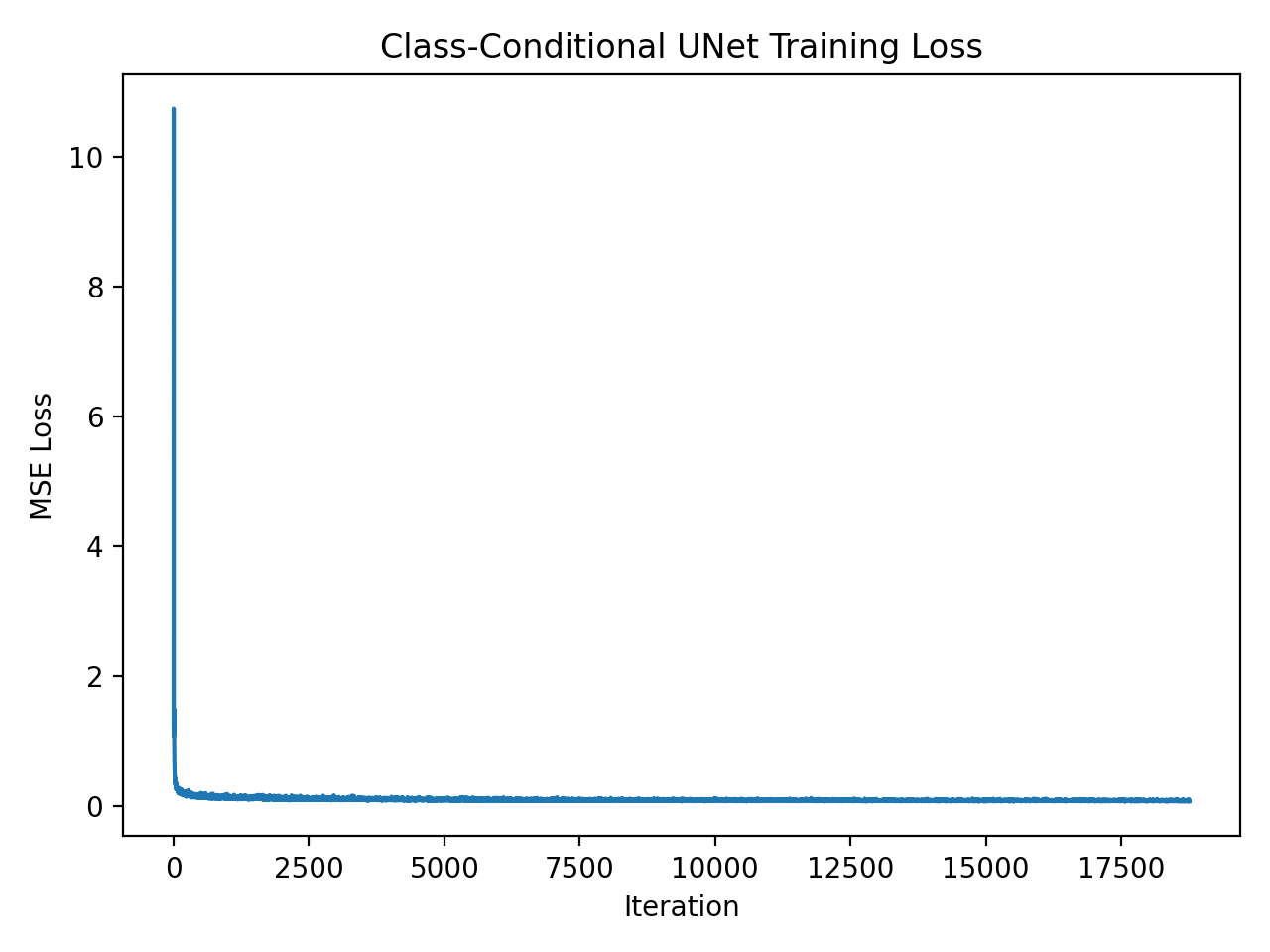
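Iterative denoising amounts to integrating the learned flow from noise to data. Below is a minimal NumPy sketch using forward Euler steps, assuming t = 0 is pure noise, t = 1 is clean data, and uniform step sizes. `flow` is a hypothetical stand-in for the trained time-conditioned UNet, and `num_steps` is a free parameter, not a value taken from this writeup.

```python
import numpy as np

def sample_euler(flow, shape, num_steps: int, rng: np.random.Generator) -> np.ndarray:
    """Integrate dx/dt = flow(x, t) from t = 0 to t = 1 with Euler steps.

    `flow` takes a batch x and a scalar time t and returns a velocity of
    the same shape as x.
    """
    x = rng.standard_normal(shape)       # start from pure noise at t = 0
    dt = 1.0 / num_steps
    for i in range(num_steps):
        t = i * dt
        x = x + dt * flow(x, t)          # one forward Euler step
    return x

# Sanity check with an oracle flow that always points straight at a fixed target
rng = np.random.default_rng(0)
target = rng.random((2, 1, 28, 28))
oracle = lambda x, t: (target - x) / (1.0 - t)
out = sample_euler(oracle, target.shape, num_steps=50, rng=rng)
```

The oracle flow (target - x)/(1 - t) is followed exactly by these Euler steps (the remaining distance shrinks by a factor (1 - t - dt)/(1 - t) per step, which telescopes to zero), so `out` lands on `target`. That makes it a cheap check of the sampler before plugging in the trained network.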

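Train the class-conditioned UNet with class-label dropout: each training example's class condition is dropped with probability p_uncond = 0.1, so the same network also learns the unconditional flow that classifier-free guidance needs. Below is the training loss curve.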
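The label dropout can be sketched in NumPy as below, assuming one-hot class vectors and a zero vector as the "no class" input (a common choice, assumed here rather than taken from the writeup). `one_hot` and `drop_class_condition` are hypothetical helper names.

```python
import numpy as np

def one_hot(labels: np.ndarray, num_classes: int = 10) -> np.ndarray:
    """Encode integer digit labels as one-hot rows."""
    return np.eye(num_classes)[labels]

def drop_class_condition(c: np.ndarray, p_uncond: float,
                         rng: np.random.Generator) -> np.ndarray:
    """Zero out each sample's class vector with probability p_uncond.

    The zero vector acts as the unconditional input, so one network learns
    both conditional and unconditional flows.
    """
    keep = rng.random(c.shape[0]) >= p_uncond   # True -> keep this sample's label
    return c * keep[:, None]

# Usage: condition vectors for one training batch
rng = np.random.default_rng(0)
labels = rng.integers(0, 10, size=128)
c = drop_class_condition(one_hot(labels), p_uncond=0.1, rng=rng)
```

Dropping labels per sample rather than per batch means each batch contains, on average, about 10% unconditional examples at p_uncond = 0.1.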

Generate 4 samples of each digit (0–9) using classifier-free guidance with scale γ = 5.0, shown after 1, 5, and 10 epochs.
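At sampling time, classifier-free guidance combines the conditional and unconditional flow predictions as u = u_uncond + γ(u_cond - u_uncond). Below is a minimal NumPy sketch, assuming the network takes (x, t, c) and treats a zero class vector as unconditional; `cfg_flow`, `grid_labels`, and the `flow` callable are hypothetical. `grid_labels(4)` builds the 40 labels for a grid with 4 samples per digit.

```python
import numpy as np

def grid_labels(per_digit: int = 4, num_classes: int = 10) -> np.ndarray:
    """Labels 0,0,0,0,1,1,1,1,... for a per-digit sample grid."""
    return np.repeat(np.arange(num_classes), per_digit)

def cfg_flow(flow, x: np.ndarray, t: float, c: np.ndarray, gamma: float) -> np.ndarray:
    """Classifier-free guidance on the predicted flow.

    u = u_uncond + gamma * (u_cond - u_uncond). gamma = 1 recovers the
    conditional prediction; gamma > 1 pushes further toward the class.
    """
    u_cond = flow(x, t, c)
    u_uncond = flow(x, t, np.zeros_like(c))   # zero vector = "no class" (assumed)
    return u_uncond + gamma * (u_cond - u_uncond)

# Usage with a toy linear "flow" standing in for the class-conditioned UNet
labels = grid_labels(4)                        # 40 labels, 4 per digit
c = np.eye(10)[labels]
rng = np.random.default_rng(0)
w = rng.standard_normal((10, 3))
toy_flow = lambda x, t, c: x + c @ w
x = rng.standard_normal((40, 3))
u = cfg_flow(toy_flow, x, 0.5, c, gamma=5.0)
```

Using `cfg_flow` inside an Euler sampler in place of the plain flow gives guided samples; γ = 1 reduces to ordinary conditional sampling and γ = 0 to unconditional sampling. Each step costs two network evaluations, one with and one without the class.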